
6/19/2023 Lzip vs xz vs lzop

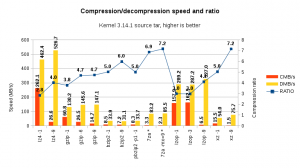

I recently wrote an email with an attached LZMA archive. It was immediately answered with something like: "What are you doing? I had to boot Linux to open the file!"

First of all, I don't care whether users of proprietary systems are able to read open formats, but this answer made me curious about the differences between some compression mechanisms regarding compression ratio and time.

This is nothing scientific! I just took the standard parameters; you might optimize each method on its own to save more space or time. Just have a look at the parameters -1 through -9 of zip. But all in all this might give you a feeling for the methods.

I've chosen some usual compression methods; here is a short digest (more or less copy&paste from the man pages):

- gzip: uses Lempel-Ziv coding (LZ77), cmd: tar czf $1. $1
- bzip2: uses the Burrows-Wheeler block sorting text compression algorithm and Huffman coding, cmd: tar cjf $1.2 $1
- zip: analogous to a combination of the Unix commands tar(1) and compress(1) and is compatible with PKZIP (Phil Katz's ZIP for MSDOS systems), cmd: zip -r $1.pack.zip $1
- rar: proprietary archive file format, cmd: rar a $1.pack.rar $1
- lha: based on Lempel-Ziv-Storer-Szymanski-Algorithm (LZSS) and Huffman coding, cmd: lha a $1.pack.lha $1
- lzma: Lempel-Ziv-Markov chain algorithm, cmd: tar --lzma -cf $1. $1
- lzop: similar to gzip but favors speed over compression ratio, cmd: tar --lzop -cf $1. $1

All times are user times, measured by the unix time command.

To visualize the results I plotted them using R, with compression efficiency on the X axis vs. time. The best results are of course located near the origin.

To test the different algorithms I collected different types of data, so one might choose a method depending on the file types.

A collection of files in human-not-readable format: I copied all files from /bin and /usr/bin, created a gpg encrypted file of a big document and added a copy of grml64-small_2010.12.iso.
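To get a feeling for this kind of measurement without installing every tool, here is a minimal Python sketch. It is not the script behind the numbers in this post: it compresses in memory with the standard-library gzip, bz2 and lzma modules instead of calling tar, zip, rar, lha or lzop, and the `benchmark` helper and the sample data are made up for illustration. Like the measurements above, it reports user CPU time rather than wall-clock time.

```python
import bz2
import gzip
import lzma
import os

# Assumption: in-process standard-library codecs stand in for the command-line
# tools from the post; zip, rar, lha and lzop are not covered here.
CODECS = {
    "gzip": gzip.compress,
    "bzip2": bz2.compress,
    # FORMAT_ALONE is the legacy .lzma container (the default would be .xz).
    "lzma": lambda data: lzma.compress(data, format=lzma.FORMAT_ALONE),
}


def benchmark(data: bytes) -> list[tuple[str, float, float]]:
    """Return (method, compressed size / original size, user CPU seconds)."""
    results = []
    for name, compress in CODECS.items():
        start = os.times().user          # user CPU time of this process
        packed = compress(data)
        elapsed = os.times().user - start
        results.append((name, len(packed) / len(data), elapsed))
    return results


if __name__ == "__main__":
    # Hypothetical sample input; replace with the bytes of a real file.
    sample = b"compression methods compared " * 50_000
    for name, ratio, seconds in benchmark(sample):
        print(f"{name:6s} ratio={ratio:.4f} user={seconds:.3f}s")
```

A smaller ratio and a smaller time are both better, so in a plot of the two the good methods end up near the origin. Highly repetitive sample data like the above flatters every method; real files of different types will spread the results out much more.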
